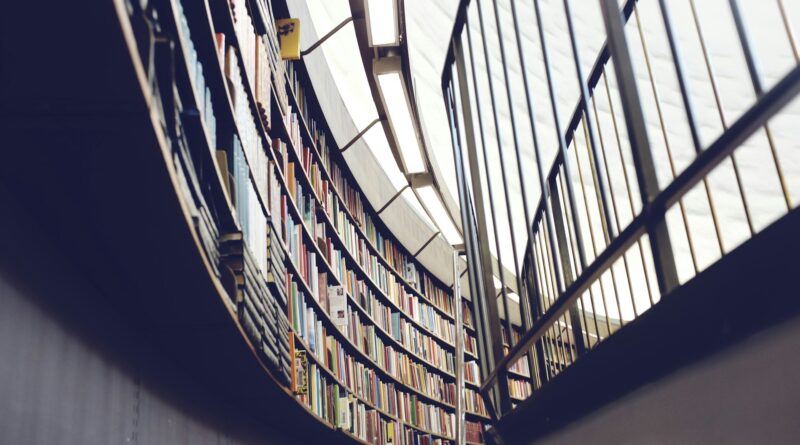

Universities Are Judged by Rankings. But Who Judges the Rankings?

Global university rankings have become one of the most influential tools in higher education. Governments use them to shape internationalization policies, universities use them for strategic planning and reputation management, and students often rely on them when making educational choices. In many countries, rankings are no longer simply reference tools; they increasingly function as policy instruments. But an important question is often overlooked: who evaluates the rankings themselves?
