Universities Are Judged by Rankings. But Who Judges the Rankings?

Güleda Doğan

Global university rankings have become one of the most influential tools in higher education. Governments use them to shape internationalization policies, universities use them for strategic planning and reputation management, and students often rely on them when making educational choices. In many countries, rankings are no longer simply reference tools. They increasingly function as policy instruments.

But an important question is often overlooked: who evaluates the rankings themselves?

Today, global rankings influence decisions about funding, hiring, promotion, mobility programs, institutional partnerships, and even immigration policies. Yet despite their growing power, many rankings continue to suffer from serious methodological and governance problems. Transparency is often limited, reputation surveys dominate important indicators, and universities with very different missions and contexts are reduced to a single numerical position. As Hazelkorn (2015) argues, rankings provide a quick and simplified comparison mechanism, but they also rely on arbitrary weights and narrow data infrastructures that can distort institutional realities.

These concerns have contributed to the emergence of the concept of responsible ranking. Inspired by broader discussions on responsible research assessment, responsible ranking argues that rankings should not only produce measurable outcomes, but should also follow principles such as transparency, methodological rigor, accountability, contextual sensitivity, and fairness. The discussion is closely connected to initiatives such as DORA (2012), the Leiden Manifesto (Hicks et al., 2015), and the growing international movement for reforming research assessment.

The concept has gained increasing visibility particularly through the work of CWTS and INORMS. In 2017, CWTS introduced its “Ten Principles for the Responsible Use of University Rankings” (Waltman et al., 2017). INORMS later developed a broader framework for fair and responsible university assessment, emphasizing governance, transparency, rigor, and measuring what truly matters (Gadd et al., 2021; INORMS Research Evaluation Working Group, 2022). These initiatives reflect a broader shift in higher education policy discussions: rankings should not simply be accepted as neutral representations of quality.

In a recent study published in Reflektif Journal of Social Sciences, I evaluated 14 international university rankings according to three major responsible ranking frameworks: the CWTS principles, the Berlin Principles, and the INORMS principles (Doğan, 2025). The study was conducted as part of a TÜBİTAK-funded research project titled Evaluating International University Rankings in the Context of Responsible Research (Project No: 121C400). The analysis included widely used rankings such as QS, THE, ARWU, US News, SCImago, and Leiden Ranking.

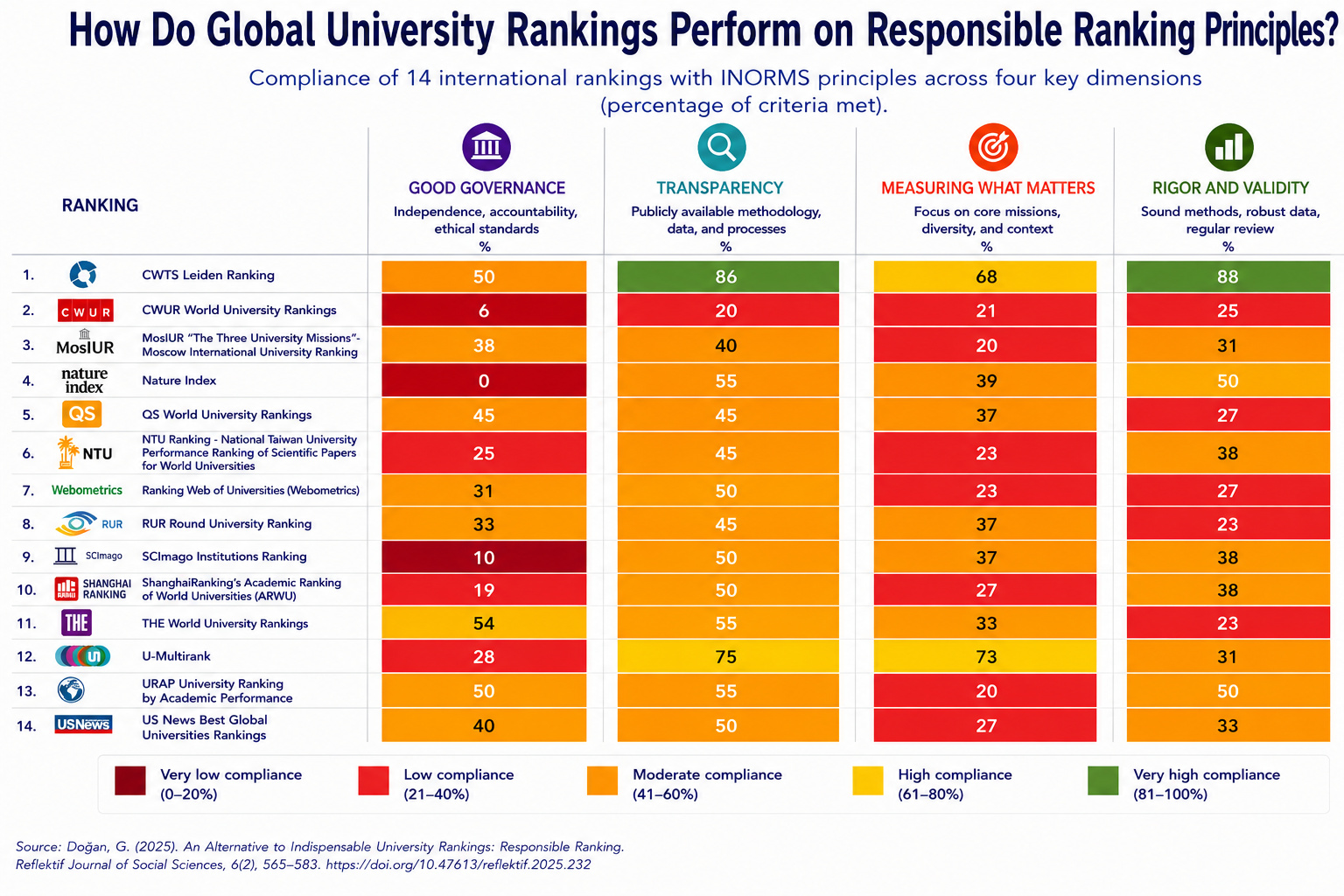

As shown in Figure 1, rankings differ substantially in terms of governance, transparency, and methodological rigor.

Figure 1. Compliance of 14 international university rankings with INORMS responsible ranking principles across four dimensions: governance, transparency, measuring what matters, and rigor. Adapted from Doğan (2025).

Among all rankings examined, CWTS Leiden Ranking demonstrated the strongest alignment with responsible ranking principles, especially in terms of transparency and methodological rigor. One important reason for this is that Leiden avoids producing a single composite score. Instead of collapsing institutional performance into one number, it allows users to examine universities through multiple indicators and perspectives. This approach aligns closely with broader critiques of one-dimensional assessment systems discussed in the responsible metrics literature.
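
The sensitivity of a single composite score to its weights can be illustrated with a minimal sketch. The universities, indicator names, scores, and weighting schemes below are all hypothetical and invented for illustration; they do not reproduce the methodology or data of any actual ranking. The point is only that the same indicator values can yield different orderings once different, equally defensible weights are applied.

```python
# Hypothetical indicator scores (0-100) for three made-up universities.
# None of these values come from a real ranking.
scores = {
    "University A": {"research": 90, "teaching": 60, "reputation": 70},
    "University B": {"research": 70, "teaching": 85, "reputation": 65},
    "University C": {"research": 60, "teaching": 70, "reputation": 95},
}


def composite_ranking(scores, weights):
    """Order institutions by a weighted sum of their indicator scores."""
    totals = {
        name: sum(weights[indicator] * value
                  for indicator, value in indicators.items())
        for name, indicators in scores.items()
    }
    # Highest composite score first.
    return sorted(totals, key=totals.get, reverse=True)


# Three illustrative weighting schemes, each summing to 1.
research_heavy = {"research": 0.6, "teaching": 0.2, "reputation": 0.2}
teaching_heavy = {"research": 0.2, "teaching": 0.6, "reputation": 0.2}
reputation_heavy = {"research": 0.2, "teaching": 0.2, "reputation": 0.6}

for label, weights in [
    ("research-heavy", research_heavy),
    ("teaching-heavy", teaching_heavy),
    ("reputation-heavy", reputation_heavy),
]:
    print(label, composite_ranking(scores, weights))
```

In this toy example each weighting scheme places a different university first, even though the underlying indicator values never change. Presenting the indicators side by side, rather than a single weighted total, leaves that choice of emphasis to the reader.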

The now-discontinued U-Multirank also performed relatively well in several categories, particularly regarding diversity and contextualization. Unlike many rankings that force institutions into a universal hierarchy, U-Multirank attempted to account for institutional diversity and different university missions.

In contrast, some of the world’s most visible rankings, especially QS and THE, showed relatively weak alignment with responsible ranking principles. Their heavy reliance on reputation surveys raises concerns about validity, representativeness, and methodological transparency. Similar criticisms have also been raised in earlier studies examining global rankings and their methodological inconsistencies (Brankovic, 2021a; Gadd & Holmes, 2020).

In addition, the large year-to-year fluctuations often observed in ranking positions suggest that a change in rank does not necessarily reflect a real change in institutional performance. Rankings can create an illusion of precision while masking substantial uncertainty and methodological instability.

ARWU, despite its historical prestige and influence, also showed important limitations. Its reliance on Nobel prizes, highly cited researchers, and publications in journals such as Nature and Science tends to favor already resource-rich institutions and large research-intensive universities. This creates structural advantages that are difficult for many universities around the world to overcome and reinforces global inequalities within higher education systems.

The issue becomes even more important when rankings directly shape policy decisions.

In Türkiye, for example, global rankings are increasingly embedded into higher education policies and institutional regulations. Several national programs require universities to appear within specific ranking thresholds for eligibility in mobility, funding, and recruitment schemes. Similar practices can also be observed internationally. Rankings increasingly influence scholarship programs, international recruitment, institutional partnerships, and strategic funding decisions.

The problem is not simply that rankings are used. The problem is that rankings are often treated as objective and neutral representations of quality, despite significant methodological limitations.

This creates a form of “reactivity,” a concept discussed by Espeland and Sauder (2007), in which universities begin changing their behavior in response to rankings rather than to educational or societal priorities. Institutions may redirect resources toward indicators that improve visibility in rankings while neglecting activities that are socially valuable but less measurable. As Brankovic (2021b) argues, rankings have become so deeply embedded in academic culture that universities often continue reproducing systems they simultaneously criticize.

As rankings become more influential, their unintended consequences become more visible. A system designed to simplify comparison can gradually reshape the behavior of entire institutions.

This does not necessarily mean rankings should disappear completely. Rankings provide visibility, attract attention to higher education systems, and offer comparative information that many stakeholders find useful. However, if rankings are unavoidable, then their use should at least be responsible.

Responsible ranking does not mean creating a “perfect” ranking. Rather, it means acknowledging limitations, avoiding simplistic comparisons, improving transparency, and recognizing institutional diversity. It also means understanding that universities cannot be reduced to a single score or position.

Perhaps the most important shift is conceptual. Instead of asking only “Which university is higher in the rankings?”, we should also ask:

What exactly is being measured?

Who benefits from these indicators?

Which institutions are disadvantaged?

And what kinds of academic behavior do rankings encourage?

The future of higher education assessment should not depend solely on visibility, prestige, and competition. It should also consider responsibility, context, diversity, and public value.

Because ultimately, rankings do not simply measure universities.

They also shape them.

References

Brankovic, J. (2021a). The Absurdity of University Rankings. LSE Impact Blog. https://blogs.lse.ac.uk/impactofsocialsciences/2021/03/22/the-absurdity-of-university-rankings/

Brankovic, J. (2021b). Academia’s Stockholm syndrome: The ambivalent status of rankings in higher education (research). International Higher Education, 107, 11–12. https://ihe.bc.edu/pub/k39jq0vo/release/1

Doğan, G. (2025). Vazgeçilemeyen Üniversite Sıralamaları için Bir Alternatif: Sorumlu Sıralama [An Alternative to Indispensable University Rankings: Responsible Ranking]. Reflektif Journal of Social Sciences, 6(2), 565–583. https://doi.org/10.47613/reflektif.2025.232

DORA. (2012). San Francisco Declaration on Research Assessment. https://sfdora.org/read/

Espeland, W. N., & Sauder, M. (2007). Rankings and reactivity: How public measures recreate social worlds. American Journal of Sociology, 113(1), 1–40. https://doi.org/10.1086/517897

Gadd, E., & Holmes, R. (2020). Rethinking the Rankings. ARMA. https://arma.ac.uk/rethinking-the-rankings/

Gadd, E., Holmes, R., & Shearer, J. (2021). Developing a method for evaluating global university rankings. Scholarly Assessment Reports, 3(1), Article 1. https://doi.org/10.29024/sar.31

Hazelkorn, E. (2015, January 28). The Obsession with Rankings in Tertiary Education: Implications for Public Policy. World Bank. https://doi.org/10.13140/2.1.3895.8400

Hicks, D., Wouters, P., Waltman, L., de Rijcke, S., & Rafols, I. (2015). Leiden Manifesto for Research Metrics. http://www.leidenmanifesto.org/

INORMS Research Evaluation Working Group. (2022). Fair and responsible university assessment: Application to the global university rankings and beyond, Version 5. July 2022. https://inorms.net/wp-content/uploads/2022/07/principles-for-fair-and-responsible-university-assessment-v5.pdf

Waltman, L., Wouters, P., & van Eck, N. J. (2017). Ten Principles for the Responsible Use of University Rankings. CWTS. https://www.cwts.nl/blog?article=n-r2q274

Cite this article in APA as: Doğan, G. (2026, May 14). Universities are judged by rankings. But who judges the rankings? Information Matters. https://informationmatters.org/2026/05/universities-are-judged-by-rankings-but-who-judges-the-rankings/

Author

Güleda Doğan is a researcher at Hacettepe University, Department of Information Management. She has a background in Statistics and completed her PhD on university rankings. Her research focuses on university rankings, research evaluation, bibliometrics, scholarly communication, open science, open access, research integrity, and questionable publishing practices.

Between 2022 and 2024, she led a national project on promoting responsible approaches to university rankings. She is also involved in a TÜBİTAK-funded research project focusing on questionable publishing and research integrity.

She has 12 years of editorial experience in a Turkish journal in the field of library and information science and has served on the editorial board of ARIST since 2022. She has contributed to EU-funded projects on open access policies and has provided consultancy on open access, open science, and related issues for institutions such as the Council of Higher Education and The Scientific and Technological Research Council of Türkiye.

Güleda Doğan currently serves on the Board of Directors of FORCE11 and is a member of the ASIS&T Professional Development Committee. She is also a co-founder of the Scholarly Communication Network, which aims to support collaboration and communication among early-career scholars.