Expert Colleague or Dancing Bear? The Mixed Responses to AI in Digital Humanities Research

Rongqian Ma, Meredith Dedema, Andrew Cox

Imagine you are a historian facing five million pages of 17th-century records, a volume that would take an entire lifetime to read manually. As more documents accumulate across archives and digital collections, the scale of the material quickly exceeds what any individual researcher can realistically process. In that situation, what would you do? Some scholars might see generative artificial intelligence (AI) as a promising way to cope with this kind of overload, helping researchers sort, summarize, and navigate vast amounts of material. Others might be more reluctant, questioning whether such systems can be trusted with work so closely tied to evidence appraisal and historical interpretation.

A recent study explored how scholars in the digital humanities research domain are navigating this new and complex landscape. Digital humanities is an interdisciplinary research field where scholars employ digital tools and computational methods to investigate cultural and humanities questions.

Drawing on an international survey of 76 respondents and 15 in-depth interviews, the study found that scholars are not simply embracing or rejecting these tools. Instead, they are adopting AI systems cautiously, using them to speed up routine tasks, explore ideas, and build new skills, while navigating problems of accuracy, authorship, and what these systems might mean for the future of scholarship. The big question is no longer just whether AI is impressive, but whether it is becoming a genuine research partner, a useful tool, or, for some, still more of a “dancing bear” than a trusted collaborator. By tracing these mixed reactions and everyday practices, the study offers a grounded look at how AI is beginning to reshape academic life.

Many scholars are already putting AI to work for everyday tasks like brainstorming research questions (62% of survey respondents) or writing computer code (46% of survey respondents). For those who are not expert programmers, the technology acts as a coach that handles the technical details of their work. This allows researchers to think at a higher level about their research without worrying about every comma in a line of code. Beyond coding, scholars use these tools to clean up messy text from old scans and even create synthetic data for specific projects. These practices suggest that for many, the technology is already becoming a “sharpened toolbox” for modern research.

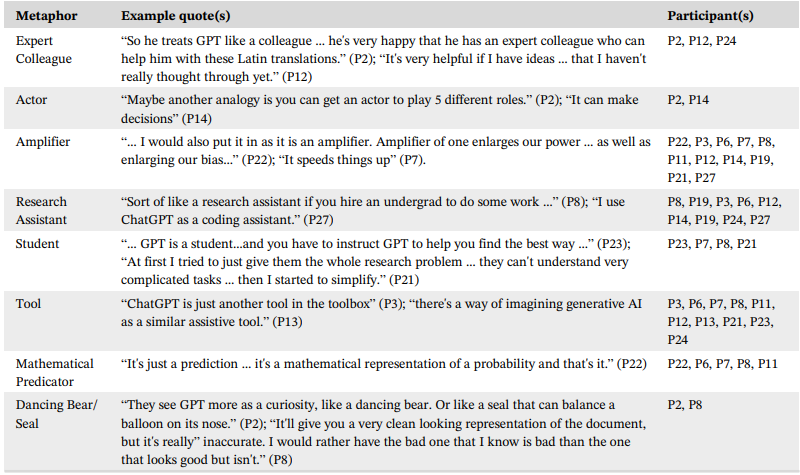

At the same time, scholars use a wide range of imaginative metaphors to describe their relationships with generative AI (Table 1). Some see it as a research assistant or an expert colleague capable of helping with difficult interpretive tasks. Others are more skeptical, comparing it to a “dancing bear,” which is impressive to watch, but not always reliable or competent. These comparisons suggest a field still negotiating how much trust to place in machine-generated insight and what, exactly, AI can add to humanistic work.

Table 1. Metaphors used by interview participants to describe generative AI.

This negotiation process is not without concerns and doubts. Scholars perceive several risks of letting machines do the heavy lifting in research. One major concern is the tendency of these systems to hallucinate or confidently present false information as if it were fact. There is also a deeper concern about the cultural tendencies and biases built into AI models. Because many are trained largely on North American and English-language data, they can carry particular worldviews and risk misrepresenting, flattening, or marginalizing other cultural and linguistic contexts.

Beyond technical risks, many scholars fear that over-reliance on AI could threaten the very foundations of humanities research. If an algorithm takes over the work of interpretation, what becomes of the researcher’s expertise, judgment, and role? These anxieties shape not only whether the technology is adopted, but how it is carefully managed within the scholarly community.

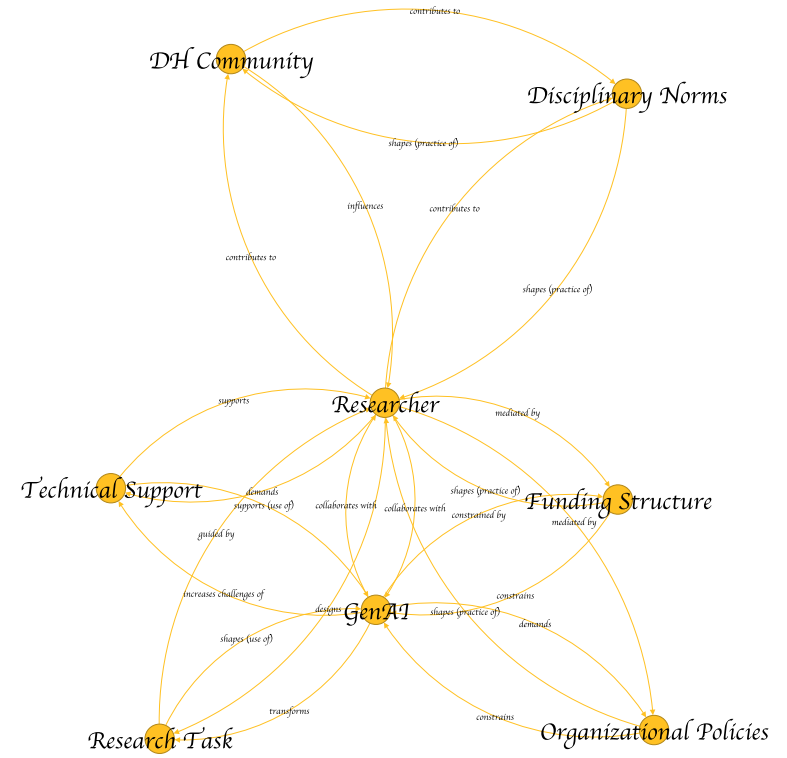

Such mixed practices, thoughts, and worries are coming together in an unsettled network (see below), with generative AI emerging as a significant presence in the digital humanities. What the network shows is a field in motion: scholars, technologies, and institutional settings are all adjusting to one another as GenAI enters research workflows.

From an actor-network perspective, change in digital humanities unfolds gradually, through a web of small adjustments as researchers experiment with GenAI, respond to institutional resources and constraints, and negotiate disciplinary norms. Over time, these shifts can reshape research practices, update infrastructures, and alter expectations about what scholarship should be. They also create space for new forms of collaboration between scholars and GenAI, especially in interpretation, creativity, and other core research activities. Seen this way, GenAI is not just a tool but a possible partner in inquiry, one that may expand the possibilities of digital humanities even as it blurs the line between human judgment and machine reasoning.

As research moves deeper into the digital age, the real question is no longer whether AI will enter scholarly work. It already has. The question is what kind of presence AI will become. Will it remain a sharpened toolbox, become a trusted partner, or prove to be more spectacle than substance? The future of research will be shaped not by the AI technology alone, but by the choices scholars and institutions make about what counts as knowledge, whose judgment matters, and how much of human inquiry we are willing to hand over to the machine.

This article is a translation of: Ma, R., Dedema, M., & Cox, A. (2026). A dancing bear, a colleague, or a sharpened toolbox? The cautious adoption of generative artificial intelligence technologies in digital humanities research. Journal of the Association for Information Science and Technology. https://doi.org/10.1002/asi.70066.

Cite this article in APA as: Ma, R., Dedema, M., & Cox, A. (2026, April 14). Expert colleague or dancing bear? The mixed responses to AI in digital humanities research. Information Matters. https://informationmatters.org/2026/04/expert-colleague-or-dancing-bear-the-mixed-responses-to-ai-in-digital-humanities-research/

Authors

Rongqian Ma is an Assistant Professor of Information and Library Science at the Luddy School of Informatics, Computing, and Engineering at Indiana University Bloomington (USA). Her research interests include digital humanities, scholarly information practice, and social studies of AI. She received her PhD in Library and Information Science from the University of Pittsburgh.
