Can AI Really Understand Scientific Novelty? Insights from a New Benchmark

Can AI Really Understand Scientific Novelty? Insights from a New Benchmark

Wenqing Wu, Yi Zhao, Yuzhuo Wang, Siyou Li, Juexi Shao, Yunfei Long, Chengzhi Zhang

In academic research, novelty is one of the most important criteria for publication. A paper is expected to contribute something new, whether a method, a dataset, or a theoretical insight. But identifying novelty is not straightforward. Even experienced reviewers may disagree, and the rapid growth of scientific publications has made the task increasingly difficult.

As the volume of submissions continues to rise, the peer review system faces growing pressure. This has sparked interest in whether artificial intelligence, particularly large language models (LLMs), can assist in evaluating research novelty. But before we can rely on AI for this task, a fundamental question must be answered: do LLMs actually understand novelty?

—Do LLMs actually understand novelty?—

The missing piece: how to evaluate novelty evaluation

Recent studies have shown that LLMs can generate peer review text and provide seemingly reasonable feedback. However, most existing work evaluates these outputs using surface-level metrics such as ROUGE or BLEU, or relies on other LLMs as judge.

These approaches have two major limitations:

- They focus on lexical similarity, rather than whether the evaluation is semantically correct

- They treat the review as a whole, without isolating specific aspects such as novelty

As a result, it remains unclear whether LLMs genuinely evaluate novelty, or simply produce fluent, review-like text.

A new benchmark for novelty: NovBench

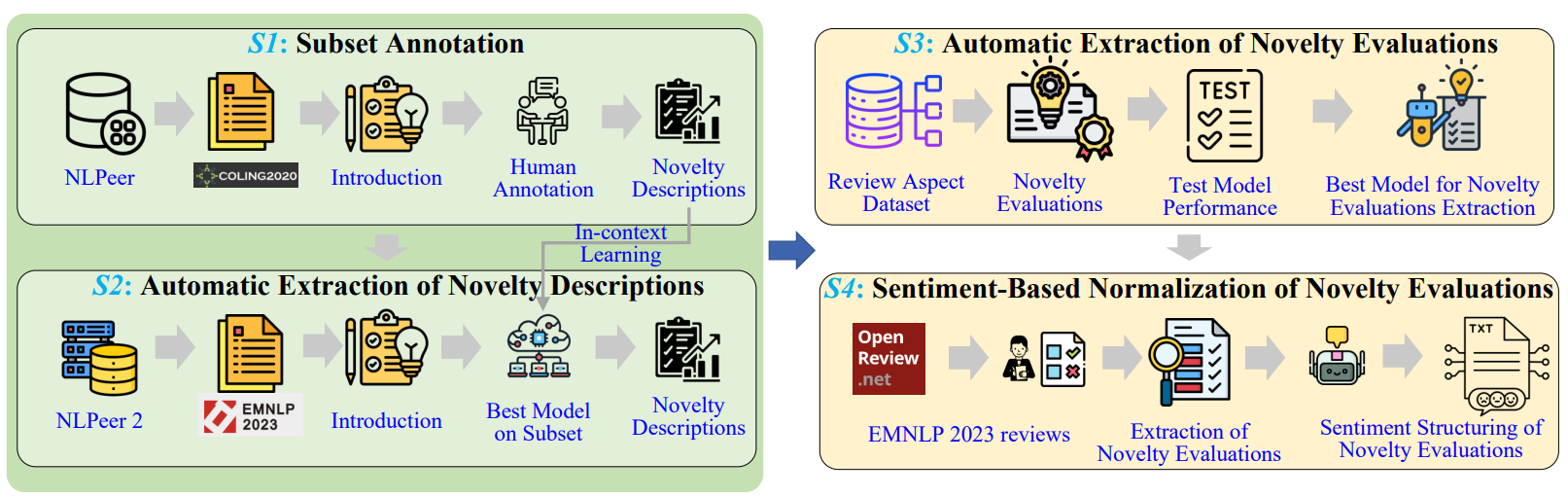

Figure1. The pipeline for constructing NovBench, consisting of four stages.

To address this gap, our work introduces NovBench, the first large-scale benchmark specifically designed to evaluate LLMs’ ability to assess scientific novelty. The Figure 1 shows the construction workflow.

The key idea behind NovBench is to compare two perspectives:

- What authors claim is novel (from paper introductions)

- What reviewers evaluate as novel (from peer review reports)

The dataset contains 1,684 paper–review pairs from a leading NLP conference, making it possible to systematically study how well models align with human judgments.

This design captures an essential aspect of scientific evaluation: novelty is not only claimed by authors, but also interpreted by reviewers.

Looking beyond scores: a four-dimensional framework

Instead of relying on traditional metrics, we propose a four-dimensional evaluation framework to assess the quality of LLM-generated novelty evaluations:

- Relevance – Does the model correctly understand the novelty described in the paper?

- Correctness – Does its judgment align with human reviewers?

- Coverage – Does it capture all key novelty points?

- Clarity – Is the evaluation clearly and effectively expressed?

This framework allows us to move from “Does it sound like a review?” to “Is it actually a good evaluation of novelty?”

What we found: strong language, limited understanding

Our experiments reveal a nuanced picture of LLM capabilities.

On one hand, models perform well in terms of clarity. They can generate fluent, well-structured evaluations that resemble human-written reviews. They also identify the main contributions of a paper with reasonable accuracy.

On the other hand, deeper issues emerge:

- Limited understanding of novelty: Models often capture high-level ideas but struggle with fine-grained distinctions

- Incomplete coverage: When a paper has multiple contributions, models tend to focus on only a subset

- Bias in evaluation: General models tend to produce overly positive feedback, while specialized models may become overly critical

- Instruction-following failures: Some fine-tuned models fail to follow evaluation formats or guidelines

Overall, the results suggest that LLMs are better at expressing novelty than understanding it.

Why this matters for peer review

Novelty assessment is central to scientific decision-making. If novelty is misjudged, important contributions may be overlooked, and less impactful work may be overvalued.

Our findings highlight an important implication:

LLMs should not be viewed as replacements for human reviewers.

Instead, they can serve as supporting tools, helping to:

- Summarize novelty claims

- Provide initial evaluations

- Highlight potential strengths and weaknesses

Human reviewers, in turn, remain essential for deeper reasoning, contextual judgment, and critical analysis.

The road ahead

This work represents an initial step toward understanding how AI can assist in evaluating scientific novelty. Several directions remain open for future research:

- Extending evaluation beyond introductions to full papers

- Exploring more advanced prompting or multi-agent systems

- Improving models’ ability to capture diverse types of novelty

More broadly, the challenge is not just improving model performance, but developing reliable and interpretable evaluation frameworks that align with human judgment.

As AI continues to evolve, its role in peer review will likely grow. The key question is not whether AI can participate in the process, but how it can do so in a way that complements human expertise rather than replacing it.

This work was accepted by Findings of ACL 2026. For more details, please see the arXiv version: Wu, W., Zhao, Y., Wang, Y., Li, S., Shao, J., Long, Y., & Zhang, C. (2026). NovBench: Evaluating Large Language Models on Academic Paper Novelty Assessment. arXiv preprint arXiv:2604.11543.

Wenqing Wu is a PhD student at the School of Economics and Management, Nanjing University of Science and Technology in China. His research interests include natural language processing, novelty evaluation of academic papers and peer review text mining.

Yi Zhao is a lecturer at the School of Management, Anhui University, China. He holds a PhD in Management from Nanjing University of Science and Technology and was a Visiting Scholar in the Department of Library and Information Science at Yonsei University. He has published more than 10 articles, including JASIST, IPM, JOI, SCIM, TFSC, etc. His research primarily focuses on team science, bibliometrics, and scientific text mining, with a particular interest in exploring the impact of artificial intelligence (AI) on scientific collaboration, gender equality, and scientific evaluation.

Yuzhuo Wang is an associate professor at the School of Management, Anhui University, China. She holds a PhD in Management from Nanjing University of Science and Technology and was a Visiting Scholar in the Department of Library and Information Science at Yonsei University. She has published more than 10 articles, including IPM, JOI, SCIM, etc. Her research primarily focuses on text mining and scientometrics.

Siyou Li is a PhD student at the School of Electronic Engineering and Computer Science, Queen Mary University of London in United Kingdom. His research interest is natural language processing.

Juexi Shao is a PhD student at the School of Electronic Engineering and Computer Science, Queen Mary University of London in United Kingdom. His research interest is natural language processing.

Yunfei Long is a senior lecturer at the School of Electronic Engineering and Computer Science, Queen Mary University of London, United Kingdom. He holds a PhD in Nautral language processing from Hong Kong Polytechnic University. He has published more than 10 articles, including JASIST, IPM, ACL, EMNLP, etc. His research primarily focuses on Natural Language Processing, Language Modeling, Neuro-Cognitive Computation, Affective computing.

Cite this article in APA as: Wu, W., Zhao, Y., Wang, Y., Li, S., Shao, J., Long, Y., Zhang, C. (2026, May 5). Can AI really understand scientific novelty? Insights from a new benchmark. Information Matters. https://informationmatters.org/2026/04/can-ai-really-understand-scientific-novelty-insights-from-a-new-benchmark/

Authors

-

View all posts

Chengzhi Zhang is a professor at iSchool of Nanjing University of Science and Technology NJUST. He received PhD degree of Information Science from Nanjing University, China. He has published more than 100 publications, including JASIST, IPM, Aslib JIM, JOI, OIR, SCIM, ACL, NAACL, etc. He serves as Editorial Board Member and Managing Guest Editor for 10 international journals Patterns, IPM, OIR, Aslib JIM, TEL, IDD, NLE, JDIS, DIM, DI, etc. and PC members of several international conferences ACL, IJCAI, EMNLP, NAACL, AACL, IJCNLP, NLPCC, ASIS&T, JCDL, iConference, ISSI, etc. in fields of natural language process and scientometrics. His research fields include information retrieval, information organization, text mining and nature language processing. Currently. He is focusing on scientific text mining, knowledge entity extraction and evaluation, social media mining. He was a visiting scholar in the School of Computing and Information at the University of Pittsburgh and in the Department of Linguistics and Translation at the City University of Hong Kong.

-

View all posts

I am a PhD student at the School of Economics and Management, Nanjing University of Science and Technology in China. My research interests include natural language processing, novelty evaluation of academic papers and peer review text mining.