Ethical Dilemma in Artificial Intelligence: A Focus on Natural Language Processing Bias

Oladipo Sunday

Humans and communication are inseparable. From oral to written communication, humanity has attained mastery in many ways. The need to carry this success over to machines, so that humans and machines can communicate conveniently with each other in real time, gave rise to Natural Language Processing (NLP).

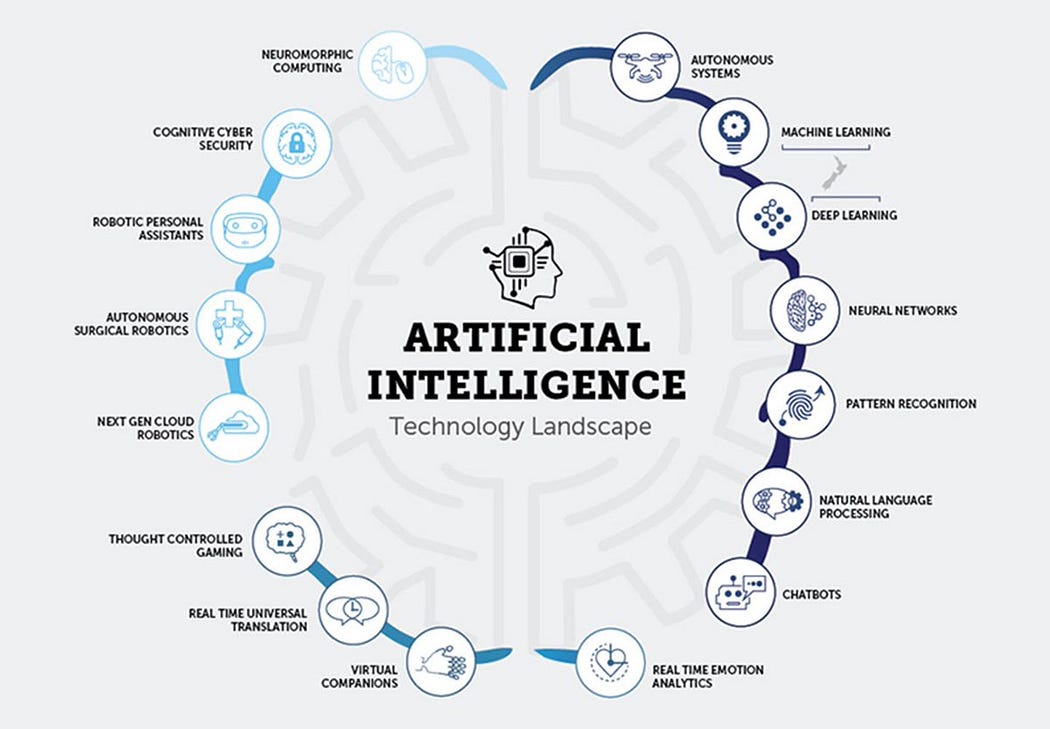

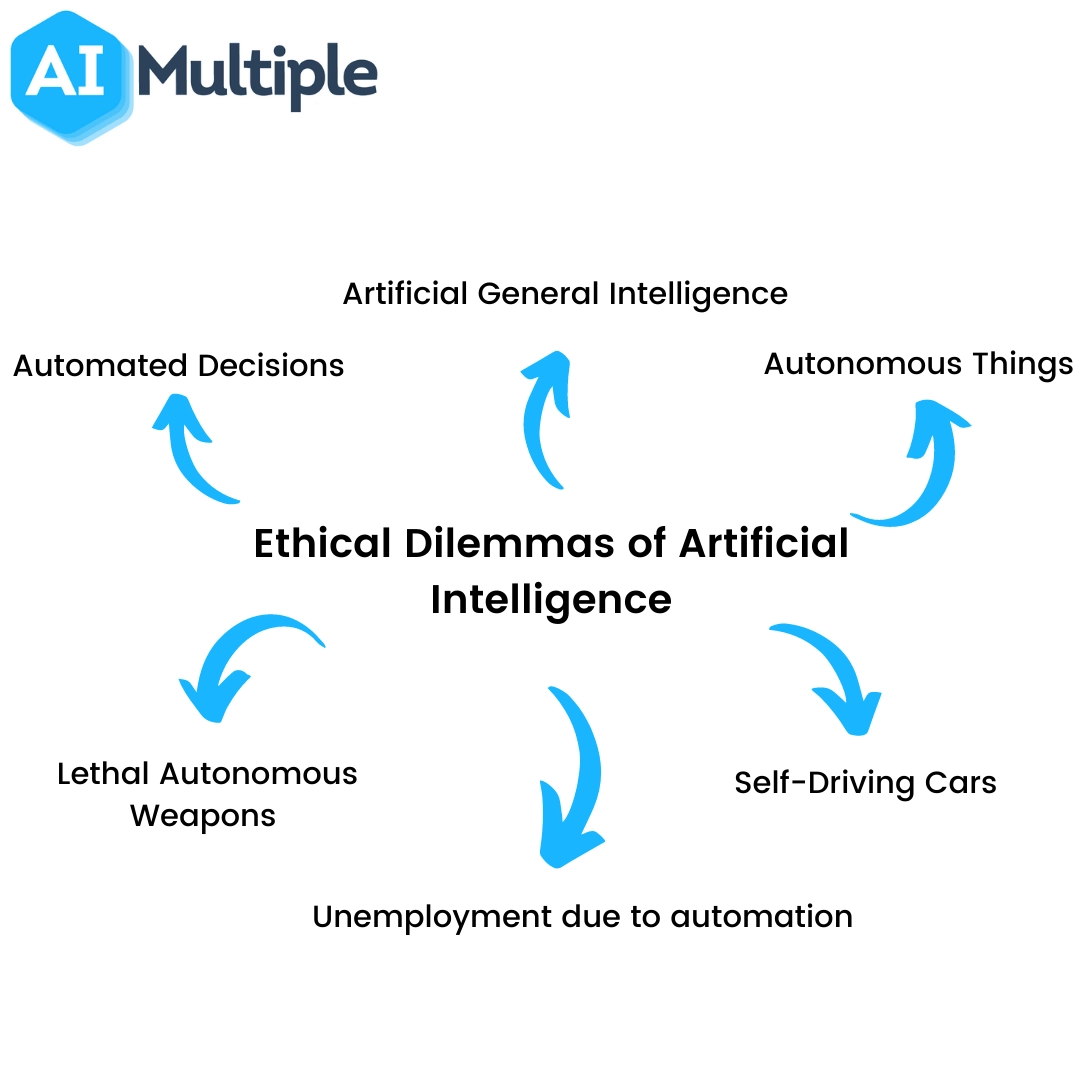

Artificial intelligence and machine learning enrich natural language processing. Natural Language Processing is involved in much of the AI technology landscape. The question of authorship, copyright, or intellectual property (IP) as it concerns humans, algorithms and machines arises often. Ethical dilemmas are a common problem for humans, and they are just as real for machines. Hence, machines need to be enhanced to make the optimal decision in the face of an ethical dilemma.

The trio of Artificial Intelligence, Machine Learning, and Natural Language Processing

Artificial Intelligence, Machine Learning, and Natural Language Processing are powerful technologies that are revolutionizing human society at an alarming pace, through chatbots, sentiment analysis, robotics, smart homes, smart cities and more. While AI seeks to mimic human intelligence, machine learning provides continuous learning and adaptation, and NLP gives machines the ability to understand human language. This trinity is a huge technological synergy driven by big data processing, at data volumes that humans are incapable of handling. The human ability to analyze, interpret, and understand a large expanse of data is limited, and in big data analytics the volume of data can reach zettabytes.

The question of over-dependence on machines, or of machines taking over control, is worrisome in many segments of society. There is already the argument that although technological advancement underpins modernization, job losses are inevitable. This is countered by the argument that new jobs are being created. Another ethical dilemma, then, is what happens to those who are unable or unwilling to keep up with the pace of technological advancement.

Autonomous decisions, autonomous things, lethal autonomous weapons, self-driving cars, artificial general intelligence and unemployment have been considered important areas of ethical dilemma in AI. Who is to be held responsible for an accident caused by a self-driving car? Holding the car itself liable would obviously not make much difference. The possibility of AI doing what it is not intended to do is inherent in it, because it does not share our values and concerns. Hence an AI product can create problems that are themselves new. Also, many high-end AI products are costly to produce. As a result, some AI products end up only in the hands of the rich, feeding a flamboyant lifestyle while the poor are unable to enjoy the same privilege. In the case of lethal autonomous weapons, who can access the AI and to what use the product will be put become pressing questions that portend increasing danger.

Natural Language Processing

Natural Language Processing comprises Natural Language Understanding and Natural Language Generation. The former deals with reading and interpreting language, while the latter is about writing and generating language.

Developing an NLP system involves sentence segmentation, word tokenization, stemming, lemmatization, identifying stop words, dependency parsing, part-of-speech tagging, Named Entity Recognition, and chunking. These processes take place within the phases of NLP as depicted in figure 3.
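A few of the steps listed above can be sketched with nothing but the Python standard library. This is only a minimal illustration under stated assumptions: the stop-word list is a tiny hypothetical sample, the sentence splitter is a naive regular expression, and `crude_stem` is a toy suffix stripper rather than an established stemmer such as Porter's. Production systems would normally rely on a dedicated NLP library instead.

```python
import re

# Tiny illustrative stop-word list (an assumption; real lists are far longer).
STOP_WORDS = {"the", "a", "an", "is", "are", "and", "of", "to", "in"}


def split_sentences(text):
    """Naive sentence segmentation: split on whitespace that follows ., ! or ?"""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def tokenize(sentence):
    """Naive word tokenization: lowercase runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", sentence.lower())


def remove_stop_words(tokens):
    """Drop tokens that appear in the stop-word list."""
    return [t for t in tokens if t not in STOP_WORDS]


def crude_stem(token):
    """Toy suffix stripping; NOT a real stemming algorithm."""
    for suffix in ("ing", "ed", "s"):
        # Only strip when at least three characters of the stem remain.
        if token.endswith(suffix) and len(token) - len(suffix) >= 3:
            return token[: -len(suffix)]
    return token


def preprocess(text):
    """Return one list of stemmed, stop-word-free tokens per sentence."""
    return [
        [crude_stem(t) for t in remove_stop_words(tokenize(s))]
        for s in split_sentences(text)
    ]


if __name__ == "__main__":
    sample = "The machines are learning. Humans talked to the chatbots!"
    print(preprocess(sample))
```

Even this toy version shows where human choices enter the pipeline: which words count as stop words and which suffixes are stripped are decisions made by the developer, not by the data.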

Morphological and Lexical Analysis

A lexicon is the vocabulary of a language, in the form of words and expressions, and morphology is the analysis of the structure of those words. In NLP, this analysis leads to the division of the text into paragraphs, sentences, and words.

Syntactic Analysis

This step analyzes the words in a sentence to reveal their grammatical structure. The words are arranged in a structure that shows how they relate to each other.

Semantic Analysis

Semantics is concerned with the literal meaning of words or phrases. In this step, the structure obtained in the previous step is given meaning, and abstractions are minimized.

Discourse Integration

At this stage, NLP focuses on the sense of the content. It highlights how sentences are connected and how earlier sentences influence the meaning of later ones.

Pragmatic Analysis

This is the last step in the working of NLP. It is concerned with overall communication and world knowledge. It ensures that what is said is interpreted within the NLP system as what is actually meant.

The problem of ambiguity (lexical, syntactic and referential) is still a subject of ongoing discussion in Natural Language Processing, and it opens avenues for ethical concerns. The difficulty of tackling these ambiguities creates an ethical dilemma: developers who lack the ethical discipline for the long haul may drop the ball instead of doing what is right to build a robust, effective and efficient system.
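Lexical ambiguity can be made concrete with a small sketch. The example below uses a simplified, Lesk-style heuristic: it picks the sense of a word whose dictionary gloss shares the most words with the surrounding sentence. The sense inventory, the glosses and the stop-word list are hypothetical toy data written for this illustration, not taken from any real dictionary or from the systems discussed in this article.

```python
import re

# Hypothetical toy sense inventory; the glosses are written for illustration only.
SENSES = {
    "bank": {
        "financial institution": "an institution that accepts deposits and lends money",
        "river edge": "the sloping land beside a river or stream of water",
    }
}

# Small illustrative stop-word list (an assumption).
STOP = {"a", "an", "the", "of", "or", "and", "that", "at", "from"}


def content_words(text):
    """Lowercased set of words in the text, minus stop words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOP


def disambiguate(word, sentence):
    """Pick the sense whose gloss overlaps most with the sentence.

    Ties go to the sense listed first, which is itself a design
    choice that can quietly favour one reading over another.
    """
    context = content_words(sentence)
    best_sense, best_overlap = None, -1
    for sense, gloss in SENSES[word].items():
        overlap = len(context & content_words(gloss))
        if overlap > best_overlap:
            best_sense, best_overlap = sense, overlap
    return best_sense


if __name__ == "__main__":
    print(disambiguate("bank", "She deposits money at the bank"))
    print(disambiguate("bank", "They fished from the bank of the river"))
```

When the context gives no clue, the heuristic silently falls back to the first listed sense. Defaults of this kind are one way a developer's shortcut can become a system's bias.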

Application of Natural Language Processing

Natural Language Processing has been put to several worthy uses over the years and its application is becoming ubiquitous in our daily lives. Some of them are:

Machine Translation (MT)

Google Translate is an example of a widely used NLP tool. MT aims to capture the tone and meaning of the input language and render them as text in the desired output language.

Chatbots and Virtual Agents

Although they need continuous improvement, chatbots and virtual agents are applications that create natural-sounding responses. Chatbots, for instance, learn to recognize contextual cues in human requests and use them to give steadily better responses or options over time.

Detection of Spam

Machine learning and NLP spam detection techniques are used to scan emails for language that indicates spam or phishing.
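One common way to scan text for spam-like language is a Naive Bayes classifier over word counts. The sketch below is a minimal version with Laplace smoothing, built on the standard library only. The class name `NaiveBayesSpamFilter` and the four training messages are hypothetical and invented for this example; real filters are trained on large labelled corpora and use many more signals.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and return its alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


class NaiveBayesSpamFilter:
    """Minimal multinomial Naive Bayes with Laplace (add-one) smoothing."""

    def __init__(self):
        self.word_counts = {"spam": Counter(), "ham": Counter()}
        self.doc_counts = Counter()

    def train(self, examples):
        """examples: iterable of (text, label) with label 'spam' or 'ham'."""
        for text, label in examples:
            self.doc_counts[label] += 1
            self.word_counts[label].update(tokenize(text))

    def predict(self, text):
        """Return the label with the higher log-probability score."""
        vocab = set(self.word_counts["spam"]) | set(self.word_counts["ham"])
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label in ("spam", "ham"):
            counts = self.word_counts[label]
            total_words = sum(counts.values())
            # Log prior: share of training messages with this label.
            score = math.log(self.doc_counts[label] / total_docs)
            for word in tokenize(text):
                # Add-one smoothing keeps unseen words from zeroing the score.
                score += math.log((counts[word] + 1) / (total_words + len(vocab)))
            scores[label] = score
        return max(scores, key=scores.get)


if __name__ == "__main__":
    # Hypothetical toy training data, for illustration only.
    training = [
        ("Win a free prize now", "spam"),
        ("Verify your account password now", "spam"),
        ("Meeting agenda for Monday", "ham"),
        ("Lunch with the project team", "ham"),
    ]
    spam_filter = NaiveBayesSpamFilter()
    spam_filter.train(training)
    print(spam_filter.predict("free prize"))
    print(spam_filter.predict("project meeting"))
```

The classifier can only reflect its training examples, so whoever selects and labels those messages effectively decides what counts as spam.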

Social Media Sentiment Analysis

NLP has become a major tool for uncovering hidden insights in the language used in social media posts. It tracks how the use of specific language shapes advertisements, promotions, and opinions.
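A simple form of sentiment analysis scores a post by counting words from a sentiment lexicon. The sketch below assumes a tiny hypothetical lexicon and a very rough negation rule that flips only the word immediately after a negation; it is meant to show the idea, not to stand in for the trained models used in practice.

```python
import re

# Hypothetical toy lexicon; real sentiment lexicons hold thousands of entries.
POSITIVE = {"love", "great", "good", "happy", "excellent"}
NEGATIVE = {"hate", "bad", "terrible", "sad", "awful"}
NEGATIONS = {"not", "never", "no"}


def sentiment_score(post):
    """Sum +1 / -1 per lexicon word, flipping a word that directly follows a negation."""
    tokens = re.findall(r"[a-z']+", post.lower())
    score = 0
    negate = False
    for token in tokens:
        if token in NEGATIONS:
            negate = True
            continue
        polarity = (token in POSITIVE) - (token in NEGATIVE)
        if polarity:
            score += -polarity if negate else polarity
        # The negation applies only to the very next token.
        negate = False
    return score


def classify(post):
    """Map the numeric score to a coarse label."""
    score = sentiment_score(post)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


if __name__ == "__main__":
    for post in ("I love this great phone",
                 "This is not good",
                 "The parcel arrived today"):
        print(post, "->", classify(post))
```

A lexicon like this encodes someone's judgement about which words are positive or negative, and that judgement may not hold across dialects, communities or contexts.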

Other application areas include spell checking, information extraction, advertisement matching and speech recognition.

Bias: An Ethical Conundrum in Natural Language Processing

Technologies such as AI and NLP might come across as objective if they existed of their own accord, but they are products of human invention, intention and innovation. Hence, subjectivity is often passed into these technologies (sometimes inadvertently). There is a need to check for data selection bias and demographic bias throughout the data life cycle. The annotation process introduces label bias, which is even worse when it involves crowdsourcing, as the perspectives or worldviews of the various people involved may subtly get in the way of what is right and what is wrong. Input representation is faced with semantic bias, which is entrenched in societal bias. On the model side comes bias over-amplification, which can result from how the model is deployed or from overfitting of the chosen model. Most NLP systems are tilted towards the English language because data resources are easier to obtain, but the bias that comes with this predilection for English remains something to contend with.
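Some of these checks can be started with very simple counts. The sketch below shows three hypothetical helper functions: one for how much of a dataset each demographic group contributes (selection and demographic bias), one for how often each group receives a given label (label bias), and one for how often annotators fully agree. The field names `dialect` and `label` and all the records are invented for illustration; they are assumptions, not data from any real study.

```python
from collections import Counter


def group_shares(records, group_key):
    """Fraction of the dataset contributed by each group."""
    counts = Counter(r[group_key] for r in records)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}


def label_rate_by_group(records, group_key, label_key, target_label):
    """Fraction of each group's records that carry target_label."""
    totals = Counter()
    hits = Counter()
    for r in records:
        totals[r[group_key]] += 1
        if r[label_key] == target_label:
            hits[r[group_key]] += 1
    return {group: hits[group] / totals[group] for group in totals}


def annotator_agreement(labels_per_item):
    """Fraction of items on which every annotator chose the same label."""
    agreed = sum(1 for labels in labels_per_item if len(set(labels)) == 1)
    return agreed / len(labels_per_item)


if __name__ == "__main__":
    # Hypothetical toy records, for illustration only.
    records = [
        {"dialect": "A", "label": "ok"},
        {"dialect": "A", "label": "ok"},
        {"dialect": "A", "label": "toxic"},
        {"dialect": "B", "label": "toxic"},
    ]
    print(group_shares(records, "dialect"))
    print(label_rate_by_group(records, "dialect", "label", "toxic"))
    print(annotator_agreement([["ok", "ok", "ok"], ["toxic", "ok", "toxic"]]))
```

A large gap in group shares or label rates does not prove bias on its own, but it marks where a closer human review of the data and the annotation guidelines is warranted.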

Conclusion

There has been a lot of ongoing research on ethical issues and the dilemmas they portend in artificial intelligence. Though biases in NLP can be so subtle as to go undetected, and remain dangerous, they are a fixable problem. A growing body of research is now focusing on Natural Language Processing. This is not a surprise, since NLP is crucial to the functioning of a large part of the AI landscape. Hence, asking the right questions of an ethical nature, in addition to questions that push for technological innovation, is essential to developing less biased AI (NLP). Self-empowering and autonomous technologies must be handled with care, with clauses that constantly ensure the technologies are under ethical check. There must be ongoing discussions on the usefulness of technology versus its predilection for creeping violations of the right to privacy, which remains one of the fundamental rights of all humans. The question of 100% security versus 100% privacy remains an ethical seesaw, often settled with some level of tradeoff. Also, whether technology has surpassed the scope of ethics remains debatable. There is, however, a need for ethics in the face of the limitless potential that must be harnessed from technology.

Cite this article in APA as: Sunday, O. (2022, September 14). Ethical dilemma in artificial intelligence: A focus on natural language processing bias. Information Matters, Vol. 2, Issue 9. https://informationmatters.org/2022/09/ethical-dilemma-in-artificial-intelligence-a-focus-on-natural-language-processing-bias/
